They're telling you to cut headcount. Here's what they're not telling you.

92,000 tech workers cut in 2026. Most companies haven't redesigned the work. That's the problem.

Noise Free TL;DR

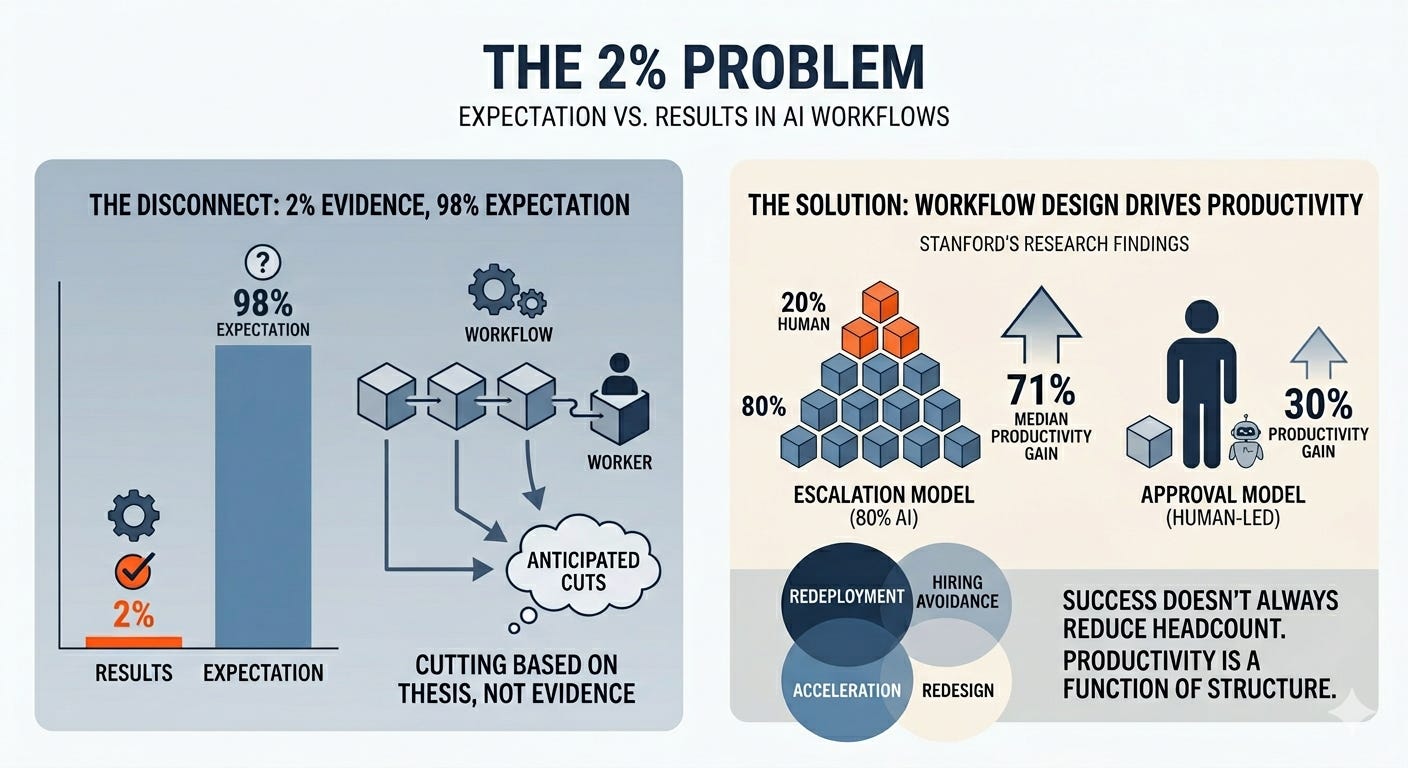

92,000+ tech workers have been laid off in 2026 so far, nearly half citing AI. But only 2% of companies have made large headcount reductions based on actual AI implementation results. Most are cutting before the technology works, not after.

The companies pulling ahead aren’t the ones cutting fastest. Stanford’s research on 51 real deployments found that workflows where AI handles volume and humans handle exceptions deliver 71% productivity gains. Workflows where humans do the work and AI assists? 30%. Same technology. Different architecture. Completely different result.

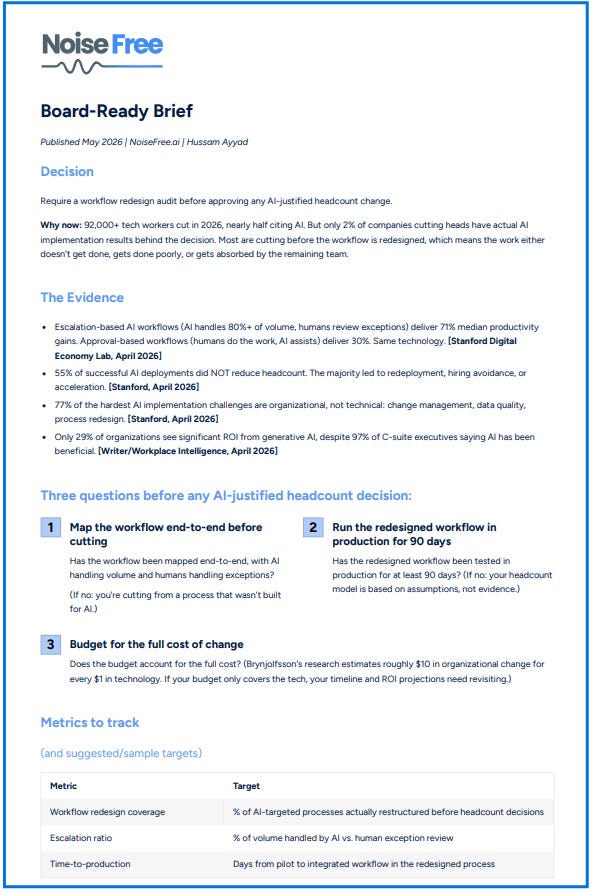

Before approving any AI-justified headcount change, require a workflow redesign audit. If you can’t show the redesigned workflow, you don’t have an AI strategy. You have a cost-cutting exercise with a technology alibi.

The only cost is your attention, and I promise to make the read worthwhile.

Lead essay

On May 20, Meta will begin cutting 8,000 jobs. It’s the company’s third round of layoffs this year. The memo frames it as efficiency, a way to “offset the other investments we’re making.” Those investments? Somewhere between $115 billion and $135 billion in AI infrastructure spend for 2026.

Meta isn’t alone. Over 92,000 tech workers have lost their jobs in 2026 across roughly 98 companies. Oracle cut 30,000. Amazon cut 16,000 in January alone. Dell cut 11,000. Microsoft, for the first time in its 51-year history, launched a voluntary buyout program for experienced workers. Nearly half of these cuts have been explicitly attributed to AI.

Every board in every mid-market company is now watching this and asking the same question: should we be doing this too?

Here’s what the data actually says: probably not. At least, not the way it’s being done.

The 2% Problem

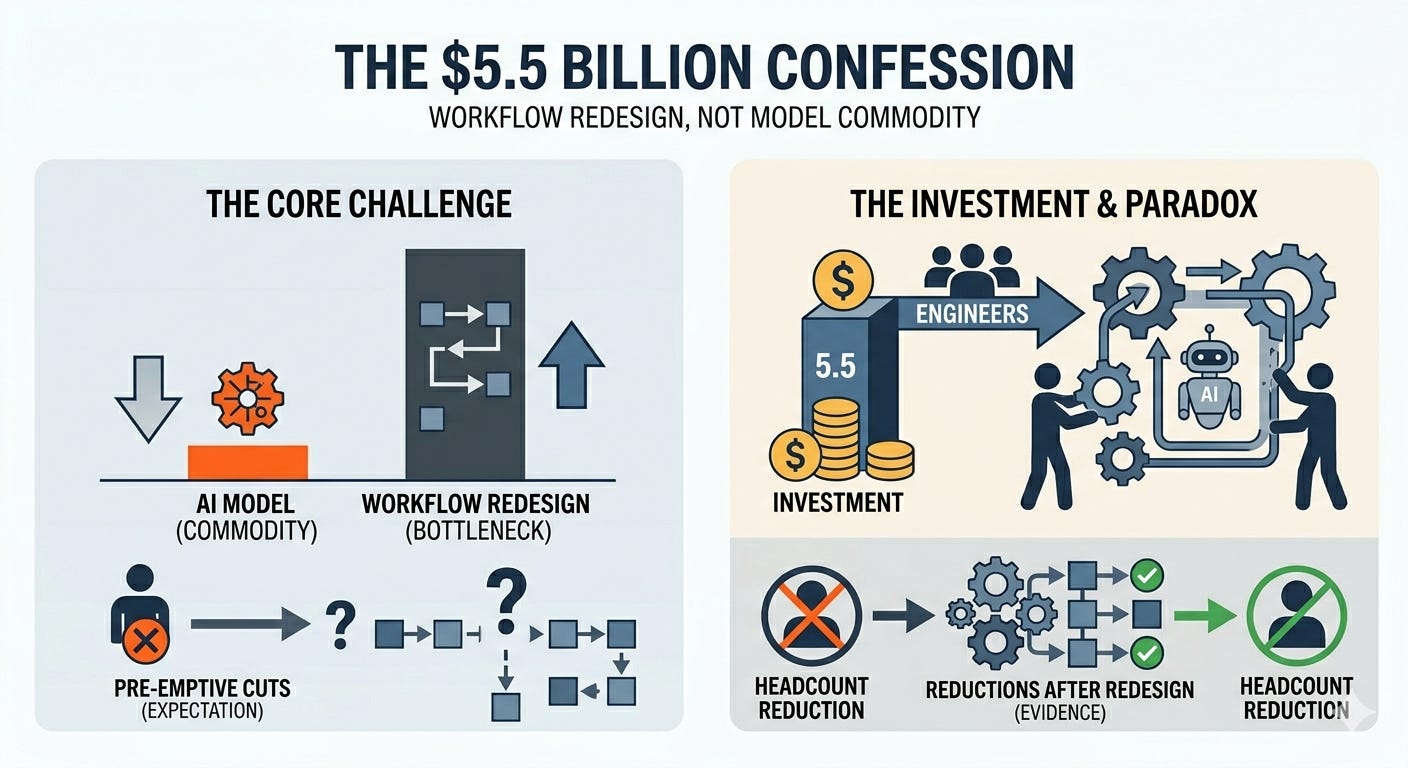

A Microsoft-commissioned survey of more than 31,000 workers found that just 2% of companies have made large headcount reductions based on actual AI implementation results. Two percent. The rest are cutting based on expectation, not evidence. They’re removing people from workflows that haven’t been tested, redesigned, or validated with AI in production. They’re making workforce decisions on a thesis, not a result.

This matters because the way you structure the work determines whether AI delivers anything at all.

In April, Stanford’s Digital Economy Lab published the Enterprise AI Playbook, a study of 51 real-world AI deployments across 41 organizations and 9 industries. It’s one of the few pieces of research that looks at what actually happened, not what was projected.

One finding stood out. Deployments using an escalation model, where AI handles 80% or more of the workload and humans review only the exceptions, delivered a median productivity gain of 71%. Deployments using an approval model, where humans do the work and AI assists, delivered 30%. Same underlying technology, in many cases literally the same vendor. Different workflow design. The productivity gap was entirely a function of how the work was structured.
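For readers who think in code, here is a minimal sketch of the difference between the two workflow designs. It is an illustration, not the Stanford team's method: the `Case` type, the `ai_confidence` score, and the 0.8 threshold are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Case:
    case_id: str
    ai_confidence: float  # hypothetical score in [0, 1]; not from the study


def route_escalation(cases, threshold=0.8):
    """Escalation model: AI resolves confident cases, humans see only exceptions."""
    auto, human = [], []
    for case in cases:
        # The threshold is an illustrative assumption, tuned per workflow in practice.
        (auto if case.ai_confidence >= threshold else human).append(case)
    return auto, human


def route_approval(cases):
    """Approval model: every case still passes through a person; AI only assists."""
    return [], list(cases)


cases = [Case(f"c{i}", conf) for i, conf in enumerate([0.95, 0.91, 0.88, 0.97, 0.42])]

auto, human = route_escalation(cases)
print(f"escalation: {len(auto)} handled by AI, {len(human)} escalated to a human")

auto_b, human_b = route_approval(cases)
print(f"approval: {len(auto_b)} handled by AI, {len(human_b)} handled by a human")
```

In this toy queue, the escalation design sends four of five cases through without a person touching them, while the approval design keeps a person on all five. The sketch shows only where the human sits in the flow; it says nothing about the size of the productivity gain.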

The Stanford team also found that 55% of their successful deployments did not result in headcount reduction. The outcomes were more varied than the headlines suggest: some led to redeployment, some to hiring avoidance, some to acceleration (doing more with the same team). The 45% that did reduce heads were the ones that had already redesigned the workflow. They weren’t cutting people and hoping AI would fill the gap. They were cutting roles that the redesigned process genuinely no longer needed.

That distinction is everything.

The Sequence is Backwards

Here’s the sequence most companies are running: cut headcount → deploy AI into the gap → discover the workflow wasn’t built for AI → watch quality degrade, cycle times increase, and the remaining team burn out absorbing unstructured work that the AI can’t handle. Then spend 12 to 18 months quietly rehiring, outsourcing, or watching customers notice.

The companies getting this right are running the opposite sequence: map the workflow → redesign it so AI handles volume and humans handle judgment calls → test the redesigned workflow in production → then, and only then, make headcount decisions based on what the new process actually requires.

This isn’t just a Stanford finding. OpenAI published its first enterprise usage report, B2B Signals, on May 5. It shows that frontier firms (95th percentile of AI usage) now use 3.5 times as much AI intelligence per worker as typical firms, up from 2x a year ago. But message volume, just using AI more often, explains only 36% of that gap. The rest comes from deeper integration: richer inputs, more complex tasks, agentic workflows where AI is doing the work rather than assisting with it. These firms aren’t winning because they have fewer people. They’re winning because their people are working inside redesigned processes that make AI do more of the heavy lifting.

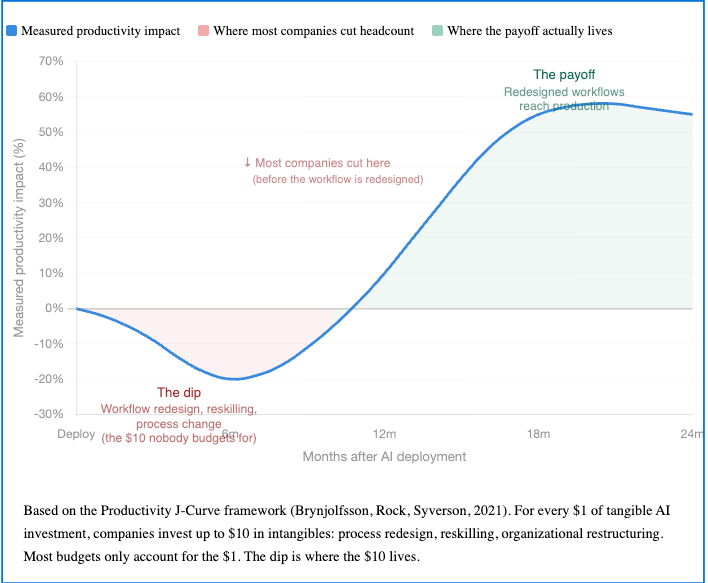

And then there’s the cost that nobody budgets for. Erik Brynjolfsson and colleagues at Stanford have documented what they call the Productivity J-Curve: general-purpose technologies like AI require significant complementary investment in intangibles (process redesign, reskilling, organizational restructuring) before productivity gains materialize. Earlier research estimated this complementary investment at roughly 10x the tangible technology spend. The Stanford Playbook confirmed this pattern. Across the 51 deployments, 77% of the hardest implementation challenges were intangible: change management, data quality, process redesign. The technology itself was consistently described as the easiest part.

So when a company budgets $5 million for an AI deployment and expects results in six months, they’re budgeting for the technology and ignoring the $50 million in organizational change that makes the technology work. When the results don’t appear, they blame the AI. Or they cut more people.
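The arithmetic behind that gap is simple enough to put in a few lines. This is a back-of-the-envelope sketch that assumes the roughly 10x multiplier from the earlier research applies as a flat ratio, which is a simplification; the function name and its parameters are invented for illustration.

```python
def full_deployment_budget(technology_spend, intangible_multiplier=10.0):
    """Estimate total cost when intangibles run at a multiple of the technology spend.

    The 10x default is the rough figure cited above, treated here as a flat
    ratio purely for illustration.
    """
    intangible = technology_spend * intangible_multiplier
    return {
        "technology": technology_spend,
        "intangible": intangible,
        "total": technology_spend + intangible,
    }


budget = full_deployment_budget(5_000_000)
print(f"Technology: ${budget['technology']:,.0f}")
print(f"Intangible: ${budget['intangible']:,.0f}")
print(f"Total:      ${budget['total']:,.0f}")
```

Under that assumption, the $5 million line item is about 9% of what the deployment really costs.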

The $5.5 Billion Confession

This brings me to something I wrote about on LinkedIn last week. On May 4, both OpenAI and Anthropic launched billion-dollar enterprise services ventures, backed by Wall Street’s biggest PE firms, within hours of each other. The stated purpose of both ventures: embed engineers inside companies to redesign workflows and integrate AI into core operations. Goldman Sachs’ Marc Nachmann said it plainly: having the model alone doesn’t change your workflows or how you operate.

That’s the AI labs admitting what the data has been screaming. The model is a commodity. Workflow redesign is the bottleneck. And there aren’t enough people who know how to do it.

Which is ironic when you think about it. The same industry that’s cutting 92,000 workers, nearly half of them because “AI can do the work,” just invested $5.5 billion to hire humans to make AI actually work inside businesses.

I’m not saying AI won’t change your workforce. It will. The Stanford data is clear: 45% of successful deployments did reduce headcount. But those reductions came after the workflow was redesigned and tested, not before. They were a consequence of proven operational change, not a precondition for it.

The question isn’t whether to act. It’s whether you’re making headcount decisions based on a redesigned operating model or based on what Meta announced in a press release.

One of those shows up in your P&L. The other shows up in your regret.

Don’t let the headcount headlines intimidate you. Ignore the noise, and get your own sequence right.

Stay noise free.

Metric of the week

71% vs. 30%

Median productivity gains from escalation-based AI workflows (AI handles volume, humans handle exceptions) vs. approval-based workflows (humans do the work, AI assists). Same technology, different operating model.

Source: Stanford Digital Economy Lab, "The Enterprise AI Playbook: Lessons from 51 Successful Deployments," April 2026

Noise Free intel

1. Meta cuts 8,000, effective May 20. Third round of 2026 layoffs. Simultaneously projecting $115-135B in AI infrastructure spend. The company is reallocating payroll to data centers, not cutting because AI replaced the work.

Source: CNN, April 24, 2026; CNBC, April 24, 2026

2. OpenAI and Anthropic launch competing PE-backed services ventures. $5.5B combined. Both embed engineers inside companies to redesign workflows. Fortune’s headline: Anthropic is taking a shot at the consulting industry. The real headline: the model makers just admitted models aren’t enough.

Source: TechCrunch, CNBC, Fortune, May 4, 2026

3. OpenAI publishes B2B Signals, its first enterprise usage report. Frontier firms use 3.5x more AI intelligence per worker than typical firms, up from 2x in April 2025. 16x more Codex messages per worker. The gap is depth of integration, not breadth of deployment.

Source: OpenAI, May 5, 2026

4. OpenAI launches self-serve ad platform for ChatGPT. Targeting $2.5B in ad revenue for 2026, $100B by 2030. Expanding beyond the US to UK, Brazil, Japan, Mexico, South Korea. Worth watching: if your employees use ChatGPT on the free tier, their AI tool now has ads. The question of who pays for inference just got real.

Source: Axios, OpenAI, May 5, 2026

5. IBM Think 2026: “Redesign how the business operates.” IBM CEO Arvind Krishna’s keynote message: the enterprises pulling ahead are not deploying more AI; they’re redesigning how their business operates. IBM announced multi-agent orchestration (watsonx Orchestrate), sovereign AI controls, and real-time data foundations. The entire conference was themed around the gap between AI deployment and AI operating models.

Source: IBM Newsroom, May 5, 2026

[BONUS] Leader Q&A

The question execs are asking: “Our competitors are cutting headcount and citing AI. Are we falling behind by not doing the same?”

The answer they need: You’re not falling behind by keeping people. You’re falling behind if you haven’t redesigned the workflows those people sit inside. The companies reporting real AI ROI aren’t the ones that cut fastest. They’re the ones that restructured how work moves between AI systems and humans before making any headcount decisions.

Cutting without redesigning doesn’t create efficiency. It creates a capability gap you’ll spend 18 months trying to close by rehiring or outsourcing, or leave open while quality degrades until a customer notices.

The Stanford Playbook found that 61% of successful AI deployments were preceded by at least one failed attempt. The companies that eventually got it right treated those failures as learning opportunities about how work needs to be restructured. The ones that didn’t? They fired the AI lead and tried again with the same broken workflow.

If you’re under board pressure to have an “AI efficiency story,” here’s a better one than cutting heads: “We’ve redesigned three critical workflows, put AI into production handling 80% of volume, and our cycle times dropped by X%.” That’s a story backed by a system. Cutting 10% of your team because Meta did it is not a strategy. It’s mimicry.



Images in this issue: courtesy of Google Gemini