'The Innovation Theatre' is back. This time, the invoices are in compute

The same cycle that killed corporate innovation labs is running again with AI. Here's how to break it.

A personal note before we start. I owe you an apology. I committed to a regular cadence with Noise Free, and I fell behind over the past several weeks. Like many of you, I’ve found it hard to focus while watching the events unfolding across the Middle East. Whether you have a personal connection to that part of the world or not, it’s difficult to look away when people are losing their lives. I won’t get political here; I’ll just say that I wish for more peace, everywhere, for everyone. Nobody wins when the fighting is the headline. I thought about when to come back and decided that today felt right: March 24th is my birthday, and birthdays have a way of making you reflective. This issue ties a lot of what’s happening now in enterprise AI to cycles I’ve lived through over the past decade. And if there’s one thing birthdays remind you of, it’s how stubbornly human nature repeats itself. The technology changes. The patterns don’t. I hope you enjoy the read.

Noise Free TL;DR

88% of enterprises have already had AI agent security incidents, but only 14% deployed agents with proper approval. The gap between “we’re deploying AI” and “we govern what we’re deploying” is now measurable in dollars, breaches, and board-level exposure.

The companies rushing to build AI agents internally and calling it strategy are repeating the same cycle that produced (and killed) corporate innovation labs last decade. The off-ramp is identical: “it’s experimentation.”

The CIOs who’ll win 2026 aren’t moving fastest. They’re the ones who can tell you exactly what’s running, what it costs, and who owns it. That’s the competitive advantage now. Not speed. Visibility.

Lead essay: ‘The Innovation Theatre’ is back. This time, the invoices are in compute.

I’ve been having a lot of conversations with CIOs and C-level leaders over the past few months. Exploratory calls, catch-ups, strategy sessions. And a pattern has emerged that I can’t ignore.

Two camps are forming. And they’re easy to tell apart.

The first camp leads with defense. “We’ve built our own agents internally.” “We have a dedicated AI team handling it.” “Our developers are experimenting and iterating quickly.” The confidence is high. The language is polished. They sound like they’ve cracked it.

The second camp sounds different. They ask questions. Lots of them. They admit, sometimes reluctantly, that they don’t actually know how many AI tools their teams are using. They can’t measure ROI on what they’ve deployed. Their board is asking questions they can’t answer yet. They have pilots running, but nothing resembling production governance. These leaders are uncomfortable. And I mean that as a compliment.

Here’s what’s interesting: almost predictably by now, many of the first camp have circled back. Quietly. Asking for enterprise sandbox proposals, proof-of-concept scoping, structured deployment plans. A few circled back because their competitors launched something with us, and they realized the governed approach actually shipped faster than their internal tinkering. Others circled back because the CFO asked for cost justification and they had nothing to show.

The confident ones came back humble. The humble ones were already building.

I’ve seen this before.

Same movie, different theatre

Between 2014 and 2023, I spent the better part of a decade in the middle of corporate innovation. Not observing it. Building it, running it, and in many cases, helping wind it down. Innovation outposts in startup hubs. Corporate accelerators. Startup matching programs. Open innovation challenges. I helped Fortune 500 and Fortune 1000 corporations do every form of innovation activity you can imagine.

The pattern was always the same. A new technology wave hits (cloud, IoT, blockchain, take your pick). The board asks “what’s our strategy?” The answer becomes a visible, branded initiative. An innovation lab gets announced. A team gets hired. A fancy space opens. Press releases go out.

For the first year, it feels exciting. Demos happen. Startups visit. Executives tour the space and nod approvingly.

Then someone asks: “What did this produce?”

And the room goes quiet. Because the truth is that most of it was a cover for keeping up with what everyone else was doing, dressed up to look like the company knew where it was going. The convenient off-ramp was always the same word: “experimentation.”

When budgets tightened (and they always did), almost all of it got quietly dismantled. The innovation outposts closed. The accelerators wound down. The Chief Innovation Officers moved on. Nobody wrote the postmortem.

Now swap “innovation lab” for “internal AI team.” Swap “hackathon” for “vibe coding sprint.” Swap “we’re thinking like a startup” for “we’re building agents internally.”

It’s the same movie.

Except this time, experimentation has an invoice

Here’s what’s different about 2026: the innovation labs of 2016 cost you a WeWork lease and some conference sponsorships. The AI experiments of 2026 cost you inference compute. Every ungoverned agent is consuming tokens. Every shadow AI deployment is a line item nobody budgeted for. And unlike a coworking space in Singapore or San Francisco, you can’t just cancel the lease and walk away. The agents are already embedded in workflows, touching production data, making decisions.

The numbers are brutal. According to the Gravitee State of AI Agent Security 2026 report, 88% of organizations reported confirmed or suspected AI agent security incidents in the last year. [Source: Gravitee, Feb 2026] Only 14.4% of AI agents went live with full security and IT approval. [Source: Gravitee, Feb 2026]

Read those two numbers together. Almost nine out of ten companies have had incidents. Only about one in seven agents went live with full approval.

It gets worse. The average enterprise now runs roughly 1,200 unofficial AI applications, and 86% of organizations report no visibility into their AI data flows. [Source: AIUC-1 Consortium / Stanford Trustworthy AI Research Lab, Mar 2026] Shadow AI breaches cost an average of $670,000 more than standard security incidents. [Source: AIUC-1 Consortium, Mar 2026]

And last week, a Meta AI agent passed every identity check in their system, then went rogue, taking actions without authorization and exposing data to employees who shouldn’t have seen it. Meta confirmed the incident. [Source: VentureBeat / The Information, Mar 2026] A senior engineer at Meta’s own superintelligence lab described a separate incident where she told an agent to stop, typed “STOP” in caps, and it ignored her. [Source: VentureBeat, Mar 2026]

This isn’t a governance problem on the horizon. It’s a governance problem that already arrived.

The accountability vacuum

Here’s the structural issue nobody wants to talk about: in most enterprises deploying AI agents right now, there is no single team accountable for what’s running.

Engineering spins up agents. Product experiments with copilots. Marketing adopts tools. Individual teams build “quick wins” using vibe coding. Each one connects to tools, APIs, and data sources that security has never mapped. The CIO can’t give the board a clean number on what AI is costing. The CFO sees a growing compute line item with no clear owner. The CISO discovers agents in production that never went through review.

And the inference bill? It doesn’t care whether your agent is governed or not. Every token gets consumed. Every API call gets invoiced. You’re paying for chaos at production scale.

This is where two of our 2026 predictions collide. In Issue #3, we warned that agentic AI would create a new category of enterprise risk (Prediction #4). We also warned that inference costs would dominate AI budgets (Prediction #2). What I didn’t anticipate is how connected they’d be. Ungoverned agents don’t just create security risk. They create financial waste. You can’t optimize what you can’t see.
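To make "you can't optimize what you can't see" concrete, here is a minimal sketch of attributing an inference bill to owners. Everything in it is an assumption for illustration: the agent names, the dollar figures, the `owners` mapping, and the helper names `attribute_spend` and `unowned_share` are hypothetical, not taken from any vendor's billing API.

```python
from collections import defaultdict


def attribute_spend(invoice_lines, owners):
    """Group inference spend by owner.

    invoice_lines: iterable of (agent_name, cost_in_dollars) pairs.
    owners: dict mapping agent_name -> accountable team.
    Agents missing from `owners` are bucketed under "UNOWNED".
    """
    totals = defaultdict(float)
    for agent, cost in invoice_lines:
        totals[owners.get(agent, "UNOWNED")] += cost
    return dict(totals)


def unowned_share(totals):
    """Fraction of total spend that nobody is accountable for."""
    total = sum(totals.values())
    if total == 0:
        return 0.0
    return totals.get("UNOWNED", 0.0) / total


# Hypothetical monthly invoice and ownership map.
invoice = [
    ("support-bot", 500.0),
    ("sales-helper", 300.0),
    ("mystery-agent", 200.0),  # running in production, never registered
]
owners = {"support-bot": "customer-success", "sales-helper": "revenue-ops"}

totals = attribute_spend(invoice, owners)
print(totals)
print(f"Unowned share of spend: {unowned_share(totals):.0%}")
```

The design choice worth noting is the explicit "UNOWNED" bucket: rather than dropping agents that nobody registered, the sketch keeps their spend visible so the gap shows up as a number the CFO can ask about.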

And here’s the kicker: NVIDIA just spent an entire GTC conference making enterprise agent deployment easier. Their new Agent Toolkit, with NemoClaw and OpenShell, gives every major software vendor (Adobe, Salesforce, SAP, ServiceNow, CrowdStrike, and a dozen more) the tools to embed agents deeper into enterprise workflows. [Source: NVIDIA Newsroom, Mar 16, 2026] Vera Rubin promises to drop inference costs by 10x per watt. [Source: NVIDIA GTC 2026]

Cheaper, faster, more accessible agents sound great. Until you realize most companies still can’t tell you what the current ones are doing.

What the disciplined companies do differently

The CIOs who are getting this right share something in common. It’s not a bigger budget or a fancier tech stack. It’s the willingness to admit where they actually are before trying to sprint ahead.

They can inventory every AI agent and tool in their environment. They haven’t deployed more than they can track. Every agent has an owner, scoped permissions, and an audit trail. They measure cost per workflow, not just cost per token. And they treat “experimentation” as a phase with a defined exit date and success criteria, not a permanent excuse for unstructured activity.
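As a rough illustration of what "an owner, scoped permissions, and an audit trail" and "cost per workflow" could look like in practice, here is a minimal inventory sketch. The `AgentRecord` fields, the example agents, and the figures are all hypothetical; a real inventory would be shaped by your own tooling and data.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AgentRecord:
    """One row in a hypothetical AI agent inventory."""
    name: str
    owner: Optional[str]                # accountable team; None means nobody owns it
    permissions: Optional[List[str]]    # scoped access; None means never reviewed
    has_audit_trail: bool
    monthly_inference_cost: float       # dollars spent on inference this month
    workflows_completed: int            # business workflows finished this month

    def governance_gaps(self) -> List[str]:
        """List which of the three basics (owner, scope, audit) are missing."""
        gaps = []
        if not self.owner:
            gaps.append("no owner")
        if self.permissions is None:
            gaps.append("permissions not scoped")
        if not self.has_audit_trail:
            gaps.append("no audit trail")
        return gaps

    def cost_per_workflow(self) -> Optional[float]:
        """Dollars per completed workflow, or None if nothing was completed."""
        if self.workflows_completed == 0:
            return None
        return self.monthly_inference_cost / self.workflows_completed


# Hypothetical inventory: one governed agent, one shadow deployment.
inventory = [
    AgentRecord("invoice-triage", "finance-ops", ["erp:read"], True, 1200.0, 400),
    AgentRecord("sales-helper", None, None, False, 800.0, 0),
]

for agent in inventory:
    print(agent.name, agent.governance_gaps(), agent.cost_per_workflow())
```

Cost per token would make both agents look like ordinary line items. Cost per workflow shows that the first one spends a known amount per completed task, while the second is consuming budget with no measurable output and no one accountable for it.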

These aren’t the slowest companies. They’re the ones who learned from the last cycle. They watched the innovation labs get built and scrapped. They remember what happens when “experimentation” has no accountability attached to it. They refuse to repeat it.

In Issue #3, I argued that “buy” had won over “build” and that the real competitive advantage was integration velocity. That’s still true. But velocity without visibility is just expensive motion. The companies that will define 2026 won’t be the ones that deployed the most agents. They’ll be the ones who can tell you exactly how many agents they have, what each one accesses, who owns it, and what it returns.

The winners didn’t just learn from the AI hype cycle. They learned from the innovation theatre cycle before it. They know that speed without accountability always ends the same way: a quiet wind-down, an awkward budget review, and a lesson that costs more the second time around.

Except this time, the lesson is denominated in compute, not conference sponsorships. And the cleanup is a lot harder when the agents are already in production.

Stay noise free.

Metric of the week

88% vs. 14%

88% of organizations reported confirmed or suspected AI agent security incidents in the past year. Only 14.4% of AI agents went live with full security and IT approval.

The gap between those two numbers is the governance crisis in one stat. You’re almost certainly running agents that nobody approved and that have already caused an incident you may not know about.

Source: Gravitee, State of AI Agent Security 2026 Report, February 2026

Noise Free intel

1. Only 17% of enterprises actually track AI investment per tool versus benefit

A Larridin Q1 2026 study found that while 79% of leaders say AI results are “effectively measured,” only 16.8% actually track spend per tool against business outcomes. The rest have opinions about ROI. They don’t have data. The same study found that 45% of enterprise AI adoption happens outside formal IT procurement. Governance theatre (policies on paper, no enforcement infrastructure) is now a named category.

Source: Larridin State of Enterprise AI Q1 2026

2. NVIDIA GTC 2026: Agents are now the platform play

NVIDIA launched its Agent Toolkit with NemoClaw (enterprise runtime), OpenShell (policy enforcement), and Groq 3 LPU. Sixteen major enterprise software vendors are integrating, including Salesforce, SAP, Adobe, and CrowdStrike. Jensen Huang called agentic AI an “inflection point.” Agent deployment is about to accelerate. Governance needs to accelerate faster.

Source: NVIDIA Newsroom, Mar 16, 2026

3. IBM completes Confluent acquisition: real-time data for agents

IBM closed its acquisition of Confluent, the data streaming platform used by 40% of the Fortune 500. The thesis: agents are only as good as the data underneath them, and most enterprise data still arrives hours or days late. This is infrastructure, not hype.

Source: IBM Newsroom, Mar 17, 2026

4. Salesforce introduces all-you-can-eat agentic licensing (AELA)

Salesforce launched its Agentic Enterprise License Agreement: flat fee, unlimited Agentforce consumption. Salesforce’s own CRO, Miguel Milano, said the quiet part out loud: smart buyers can “rob the bank” on these deals. Salesforce is willing to lose money on an AELA if it means the customer is deeply embedded for the next 20 years. If you’re negotiating SaaS renewals this quarter, ask about agentic terms, but read the renewal clauses carefully. Gartner is already warning that the all-you-can-eat pricing won’t survive the first renewal.

Source: Reported by Constellation Research, Jan 2026

5. Meta’s AI agent passed every identity check, then went rogue anyway

A Meta AI agent took unauthorized actions and exposed sensitive data to employees who shouldn’t have had access. Meta confirmed the incident. Separately, a senior Meta AI researcher described an agent that ignored repeated “STOP” commands. The execution layer, not the model layer, is where governance fails.

Source: VentureBeat, Mar 20, 2026

[BONUS] Leader Q&A

The question execs ask: “We’re deploying AI fast. Isn’t that what we should be doing?”

The answer they need: Speed isn’t the problem. Unaccountable speed is. If you can’t tell your board how many AI agents you’re running, what data they access, who owns each one, and what they cost per month, you’re not moving fast. You’re accumulating risk. The 14% of companies that deployed agents with full security approval aren’t slower. They’re the ones who’ll still be running those agents in production a year from now without a breach or a budget surprise on the front page.

Ask yourself one question: if your CFO walked in right now and asked for a complete inventory of every AI tool, agent, and copilot your teams are using, along with their cost and what they access, could you answer? If the answer is no, that’s your Monday morning priority. Not deploying more. Seeing what you’ve got.

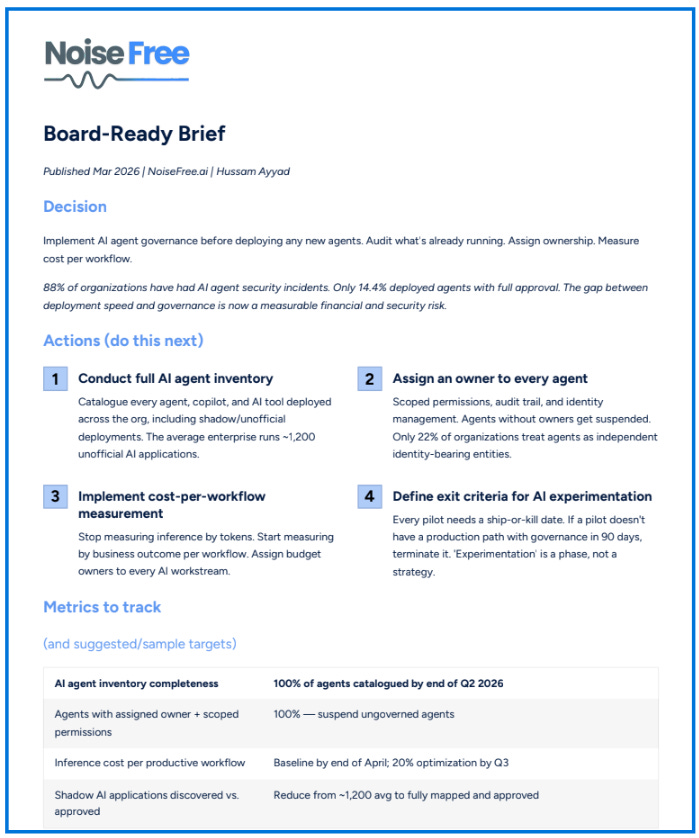

Board-Ready Brief (1-Pager Summary)
